Implementation and Evaluation of CYPHONIC client focusing on Sequencing mechanisms and Concurrency for packet processing

🤖 AI-generated summary ✨

- P.1 — Title slide. Presentation at GCCE 2023 on CYPHONIC client implementation focusing on sequencing and concurrency for packet processing.

- P.2 — Presentation outline covering P2P communication solutions, CYPHONIC overview, challenges, objectives, proposed schemes, and evaluation.

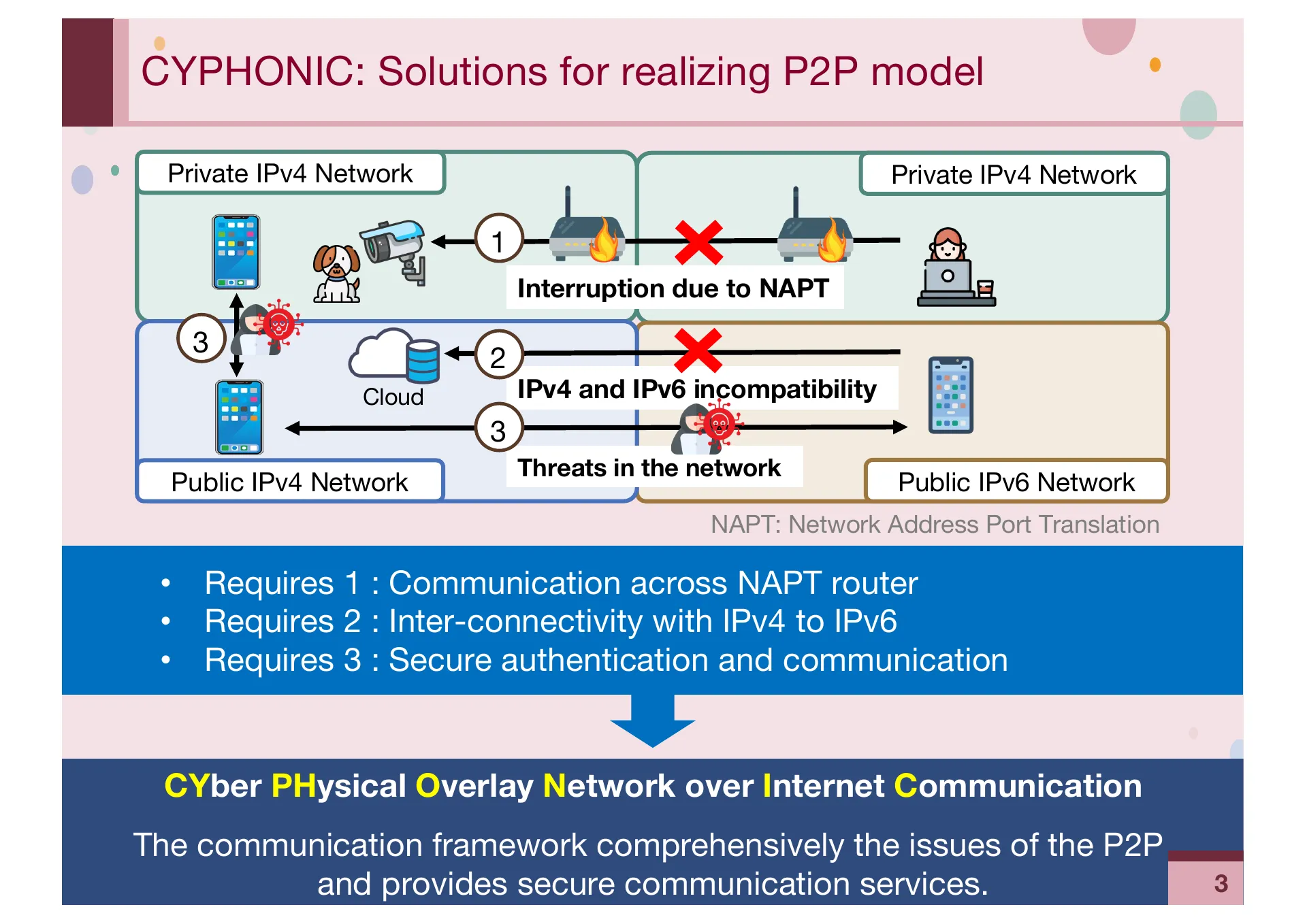

- P.3 — Challenges for realizing P2P communication: NAPT traversal, IPv4-IPv6 incompatibility, and network security threats.

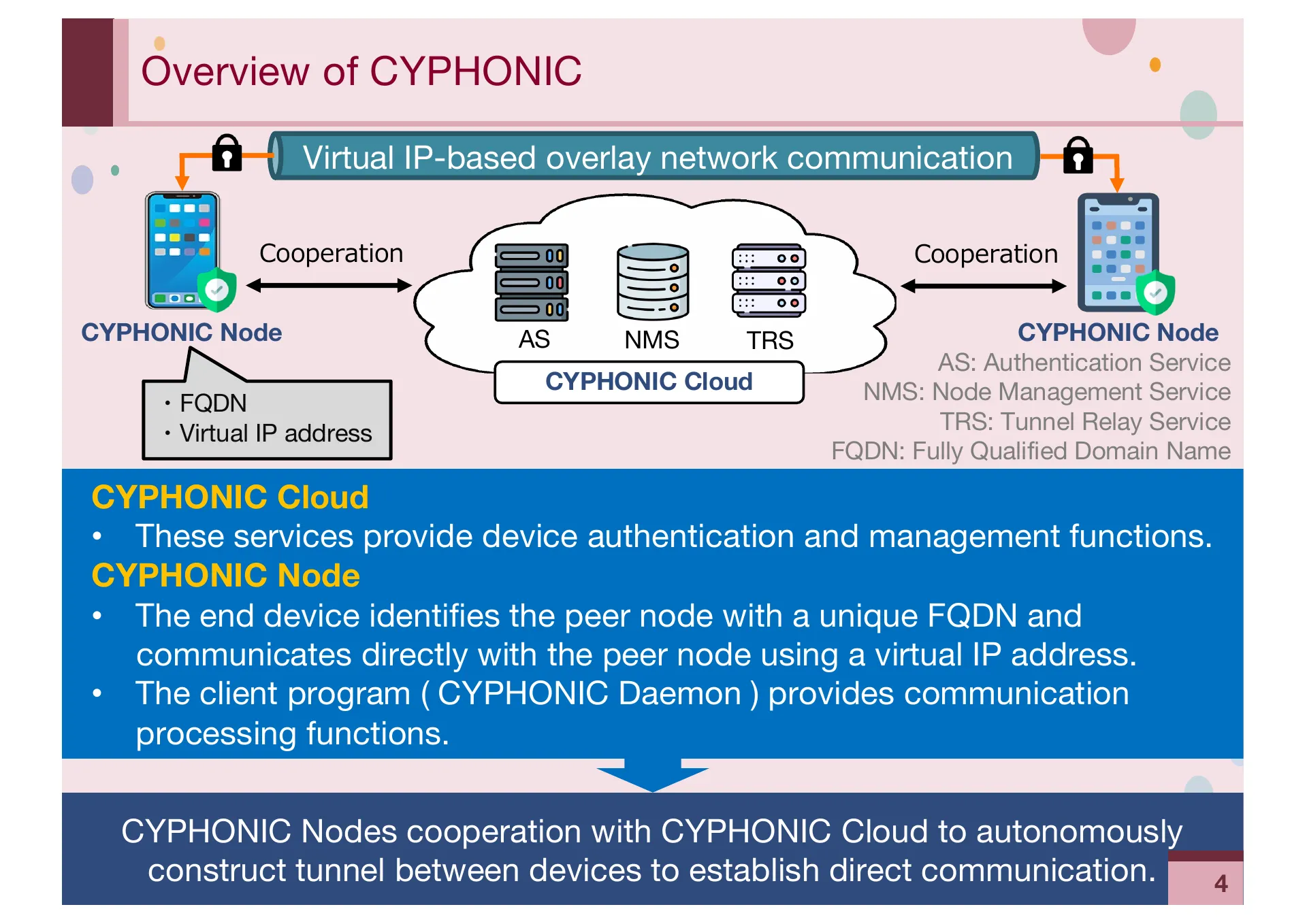

- P.4 — Overview of CYPHONIC. Virtual IP-based overlay network with AS, NMS, and TRS cloud services for device authentication and management.

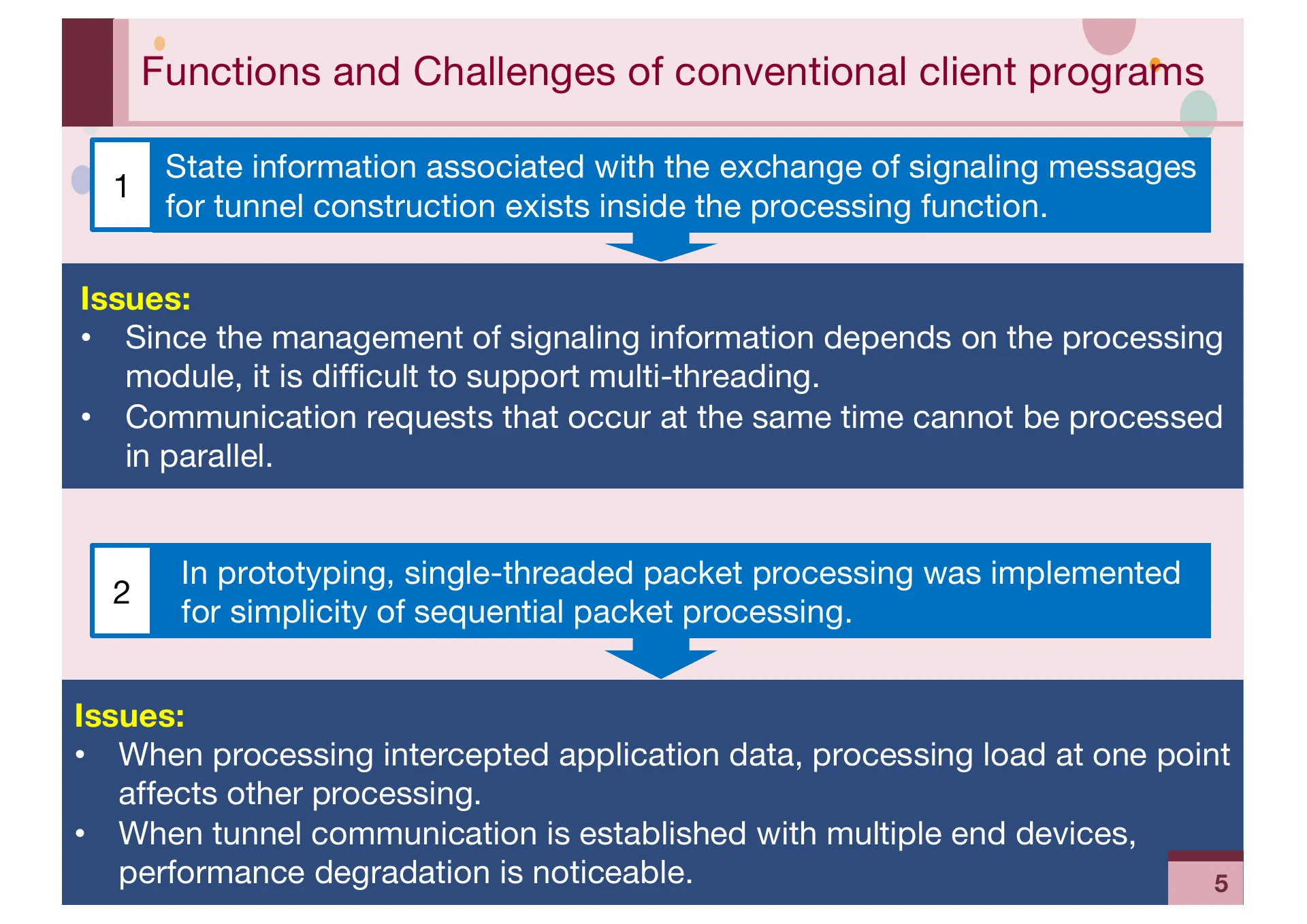

- P.5 — Challenges of conventional client programs. State information coupled to processing modules prevents multi-threading. Single-threaded packet processing causes load concentration.

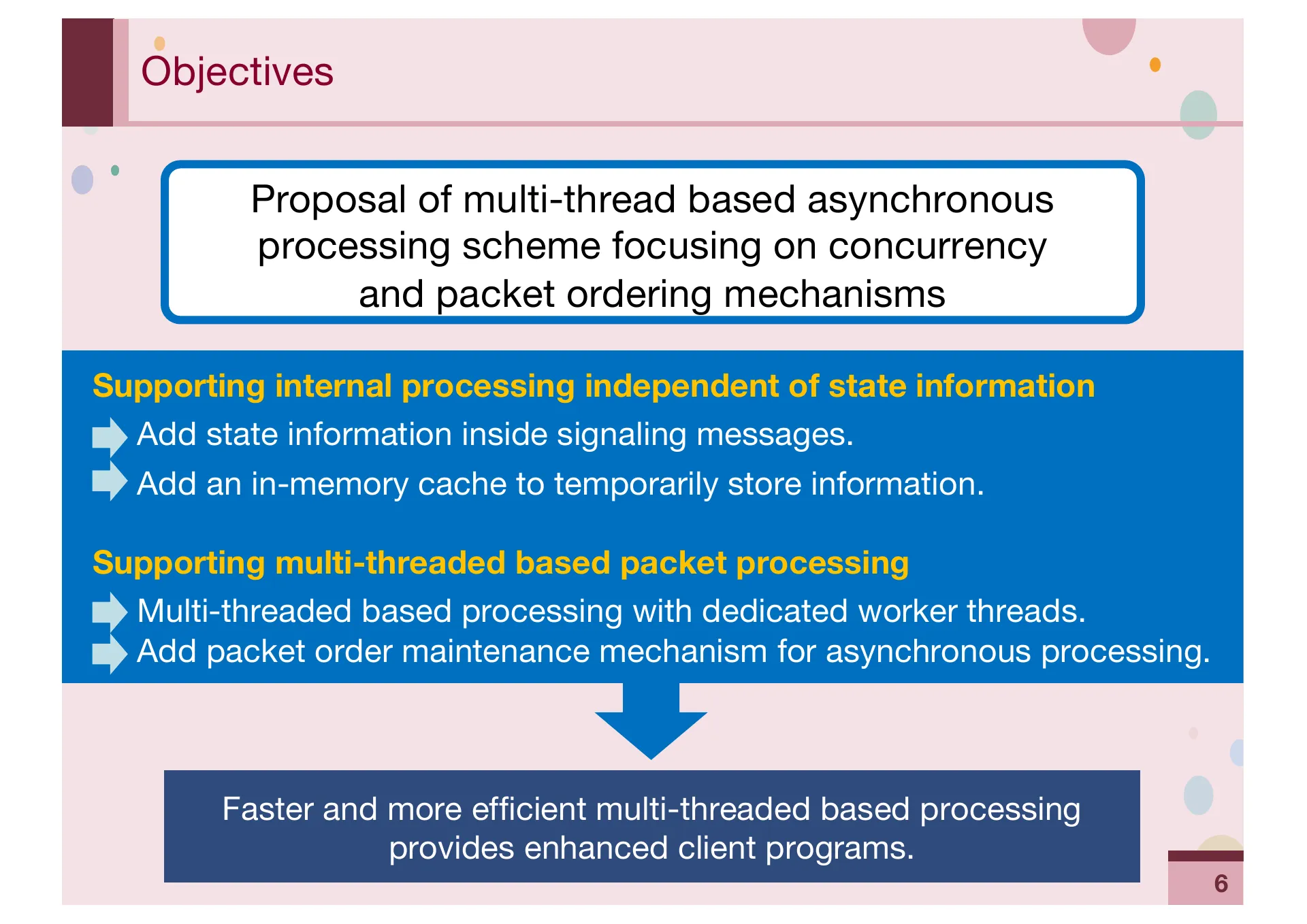

- P.6 — Research objectives. Proposal of a multi-thread-based asynchronous processing scheme focusing on concurrency and packet ordering mechanisms.

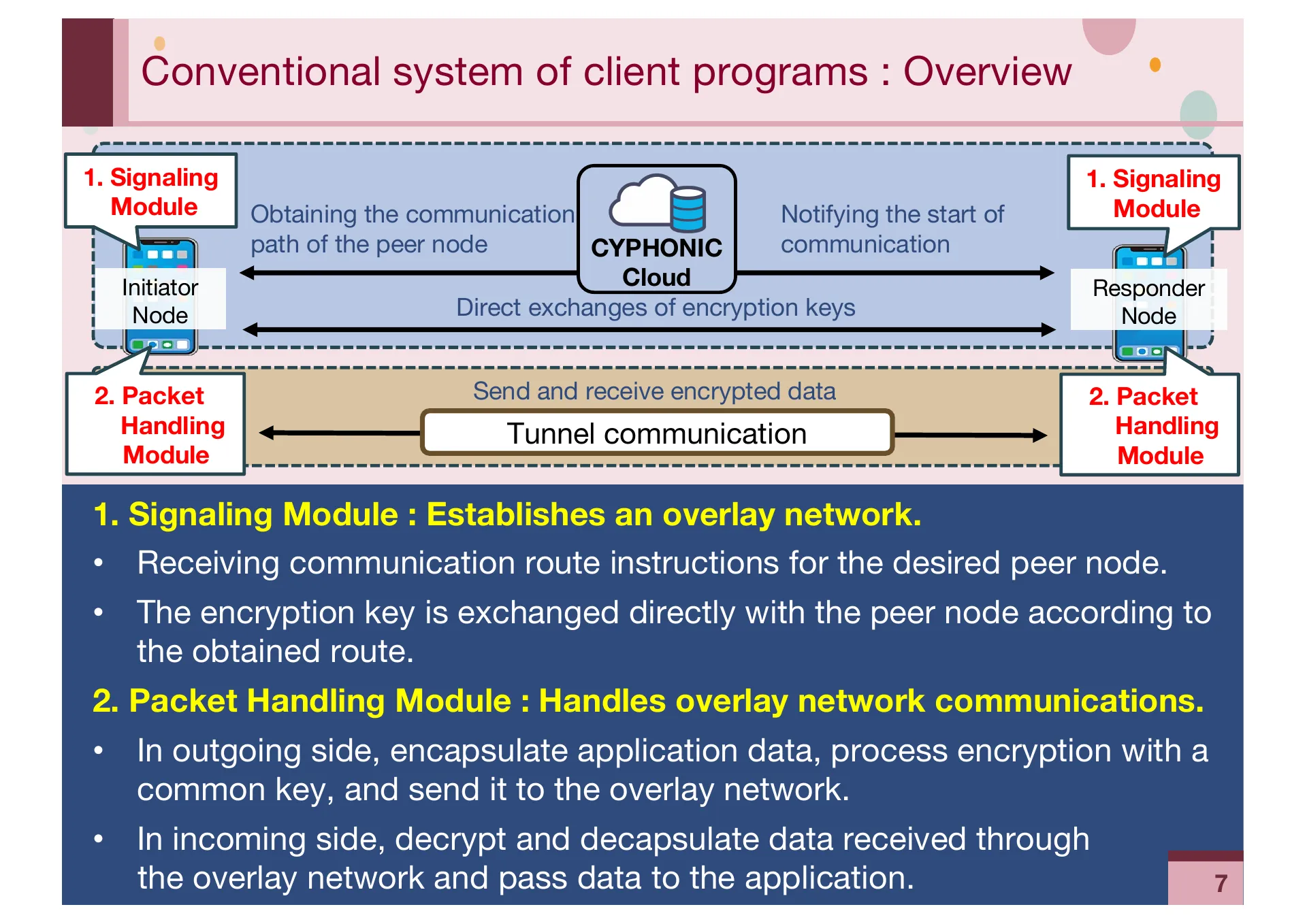

- P.7 — Conventional system overview. The Signaling Module establishes the overlay network; the Packet Handling Module processes encrypted tunnel communication.

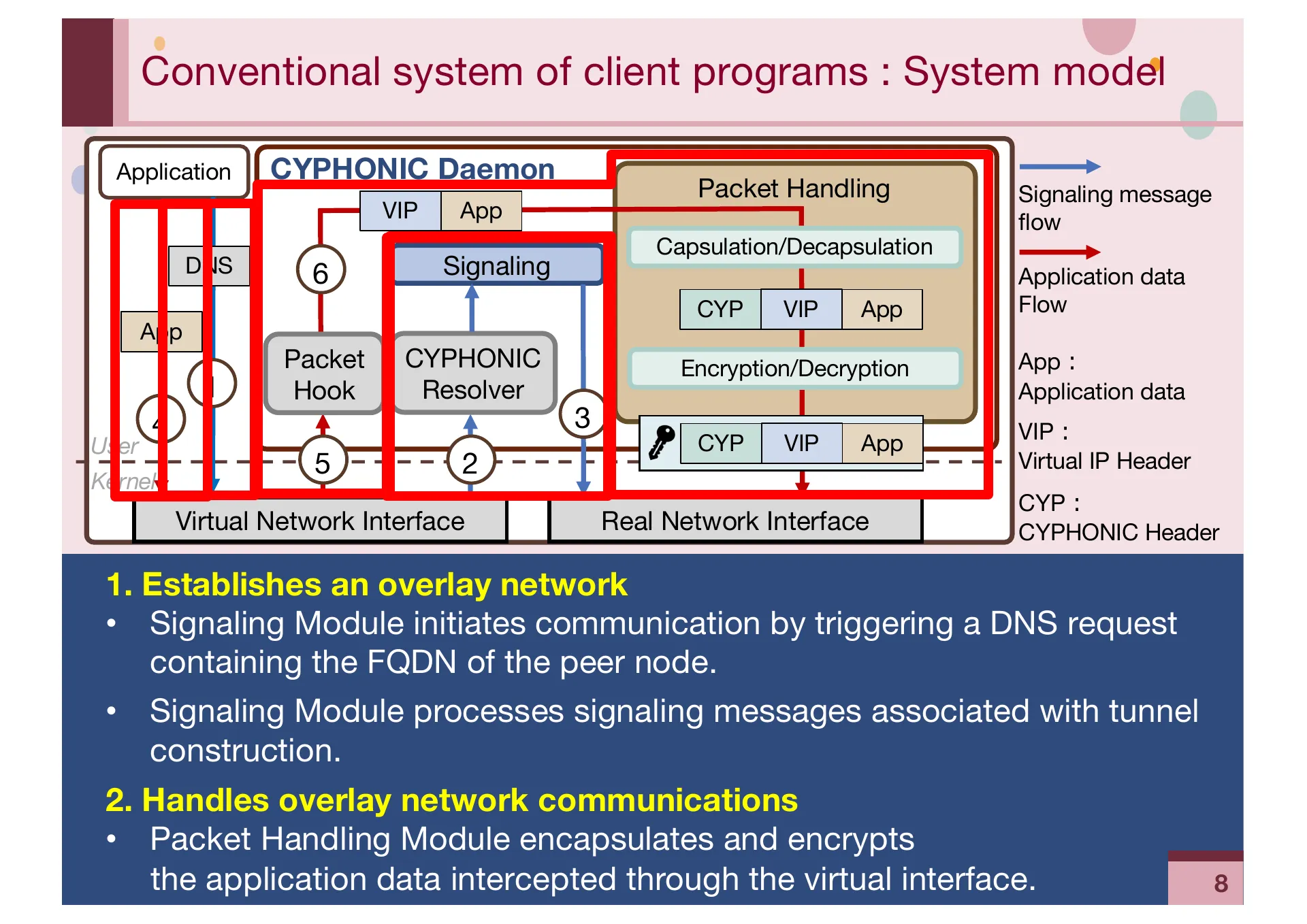

- P.8 — Conventional system model. Internal flow from DNS-triggered signaling initiation through packet handling via virtual network interface.

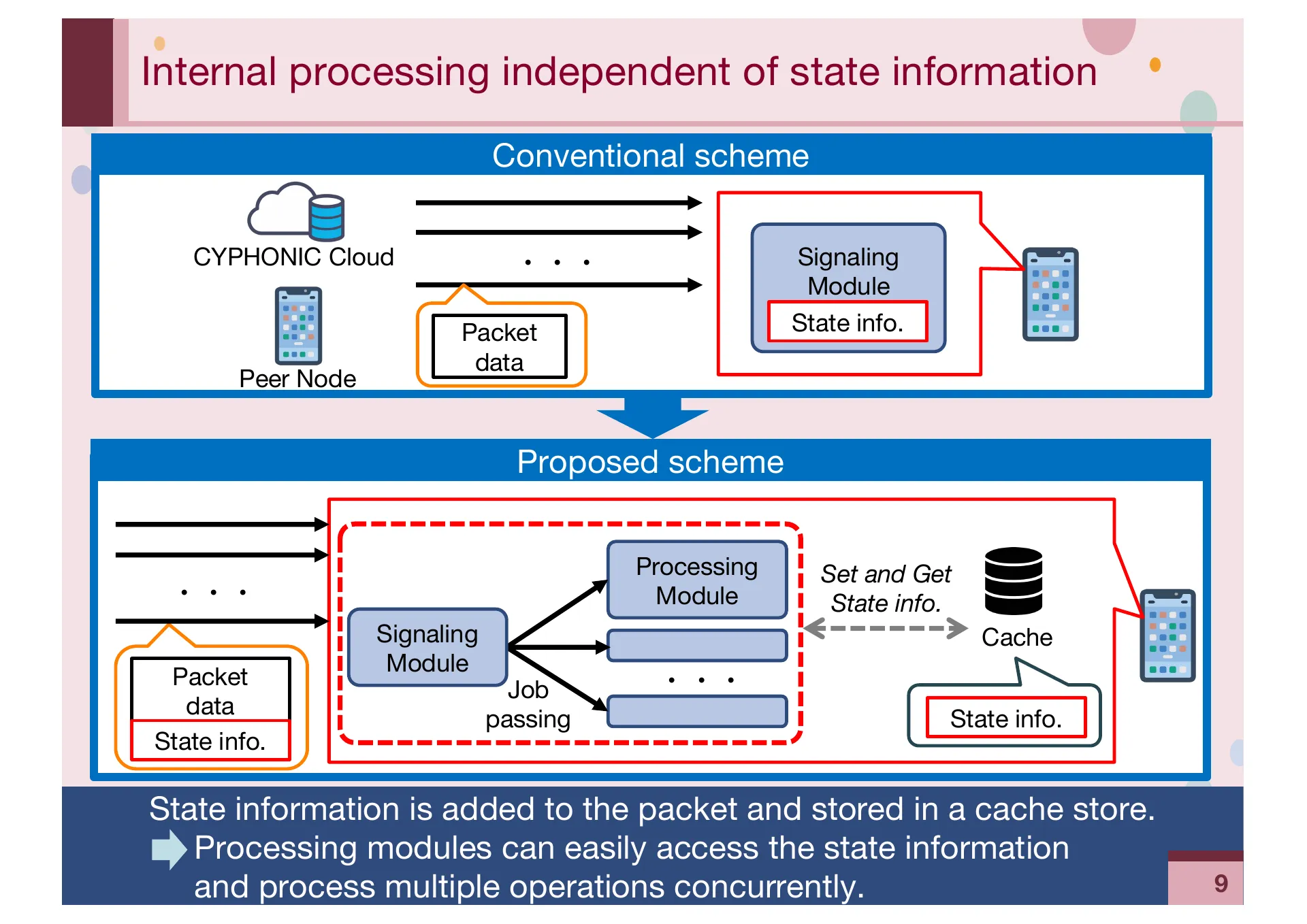

- P.9 — Proposed state information independence. Separation of state info from processing modules into an in-memory cache for multi-thread support.

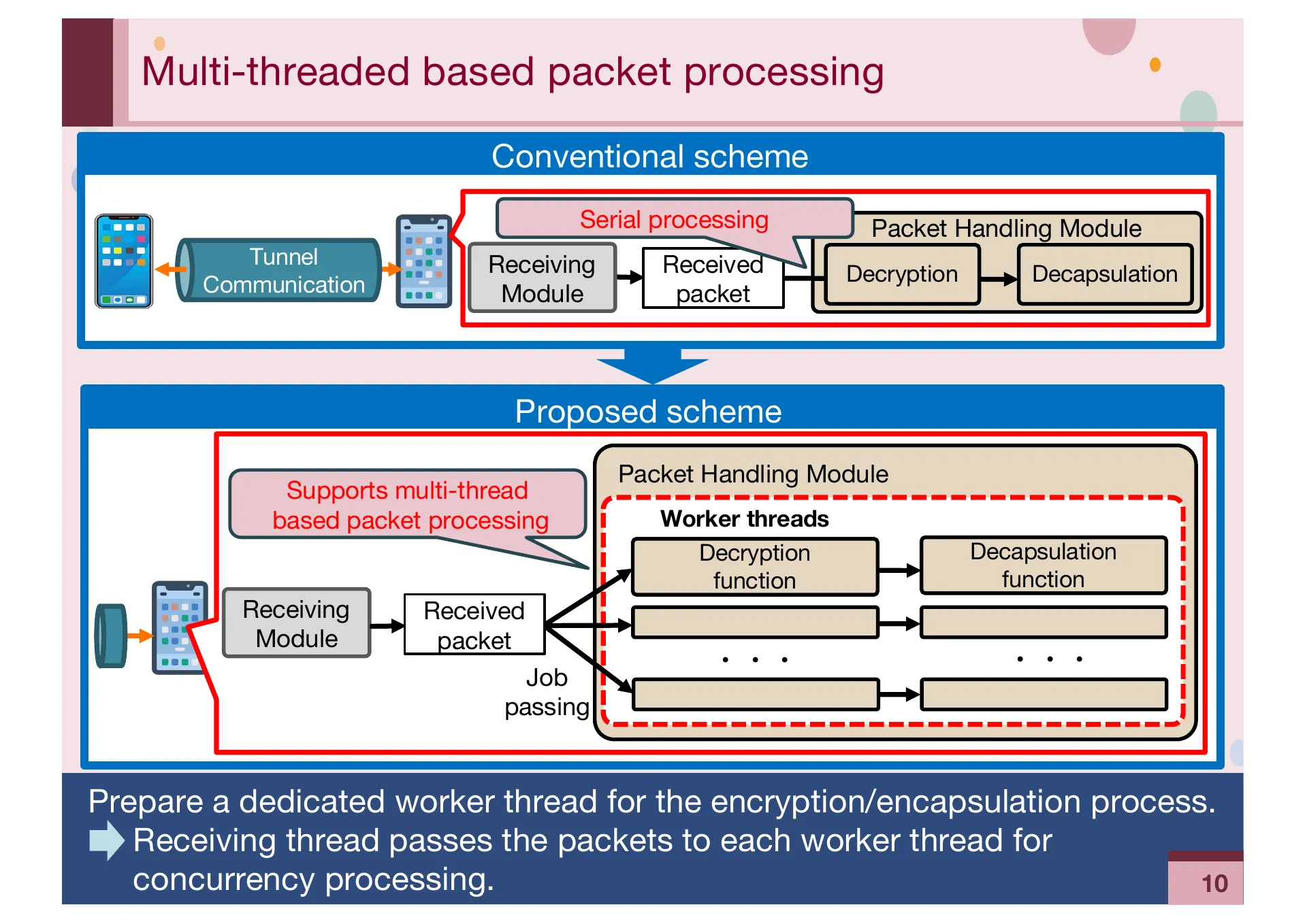
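The separation described for P.9 can be illustrated with a small shared store. This is a minimal sketch, not the CYPHONIC client's actual code: `PeerState`, `StateStore`, the field names, and the address `10.0.0.2` are all hypothetical, and a mutex-guarded map is only one plausible form of an in-memory cache.

```go
package main

import (
	"fmt"
	"sync"
)

// PeerState is a hypothetical record of per-peer tunnel state.
type PeerState struct {
	VirtualIP   string
	SessionID   string
	Established bool
}

// StateStore keeps state outside the processing modules so that any
// goroutine can read or update it (assumed design, not from the slides).
type StateStore struct {
	mu    sync.RWMutex
	peers map[string]PeerState
}

func NewStateStore() *StateStore {
	return &StateStore{peers: make(map[string]PeerState)}
}

// Put stores or overwrites the state for one peer.
func (s *StateStore) Put(st PeerState) {
	s.mu.Lock()
	defer s.mu.Unlock()
	s.peers[st.VirtualIP] = st
}

// Get returns a copy of the state, so callers never share mutable data.
func (s *StateStore) Get(vip string) (PeerState, bool) {
	s.mu.RLock()
	defer s.mu.RUnlock()
	st, ok := s.peers[vip]
	return st, ok
}

func main() {
	store := NewStateStore()
	store.Put(PeerState{VirtualIP: "10.0.0.2", SessionID: "s-1", Established: true})

	// Several goroutines read the same state concurrently.
	var wg sync.WaitGroup
	for i := 0; i < 4; i++ {
		wg.Add(1)
		go func(id int) {
			defer wg.Done()
			if st, ok := store.Get("10.0.0.2"); ok {
				fmt.Printf("worker %d sees established=%v\n", id, st.Established)
			}
		}(i)
	}
	wg.Wait()
}
```

Because the modules hold no state of their own in this sketch, any number of goroutines can run the same processing code against the shared store.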

- P.10 — Proposed multi-threaded packet processing. Transition from serial processing to dedicated worker threads for parallel decryption and decapsulation.

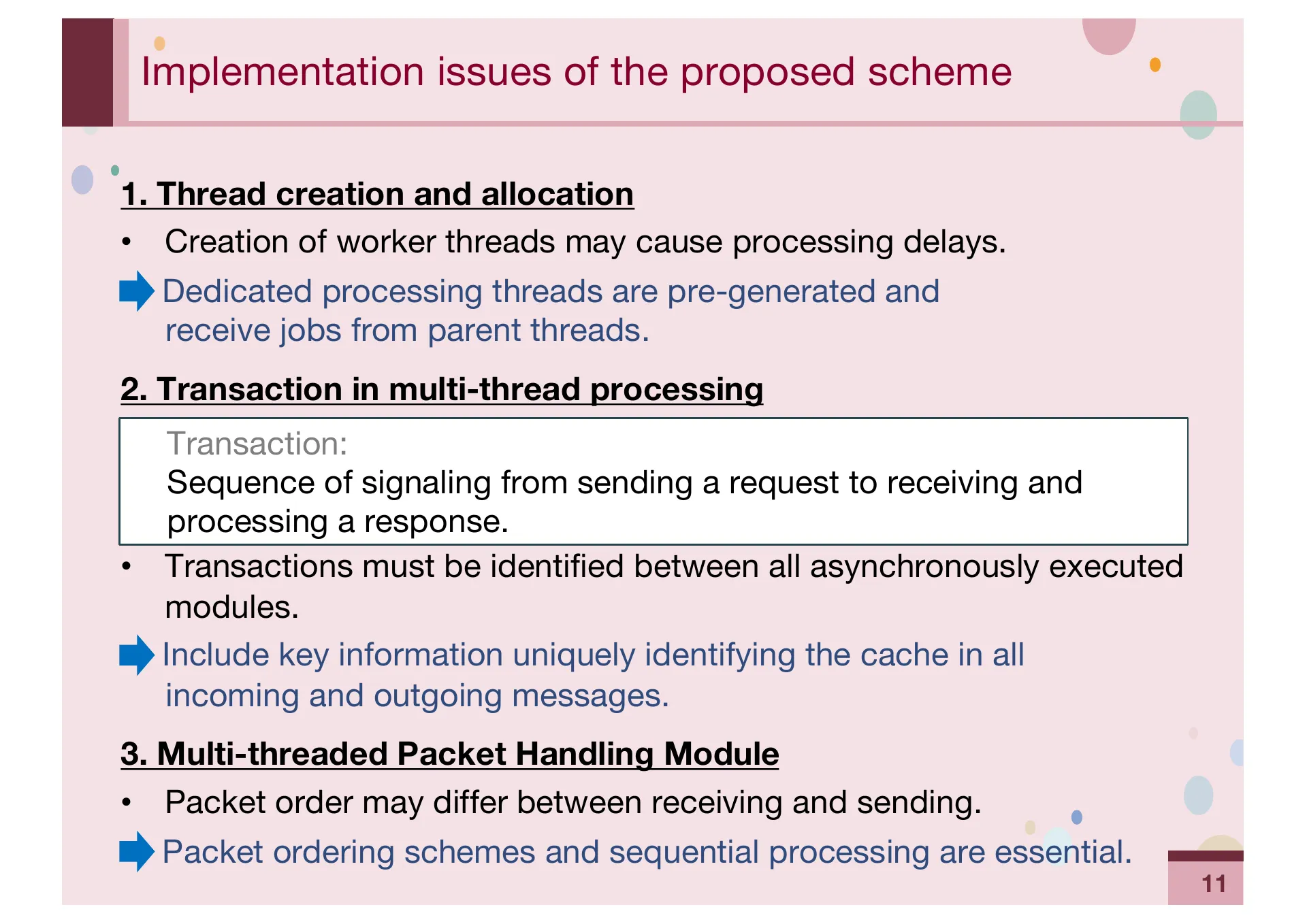

- P.11 — Implementation issues. Thread creation overhead, transaction identification across asynchronous modules, and packet ordering in multi-threaded processing.

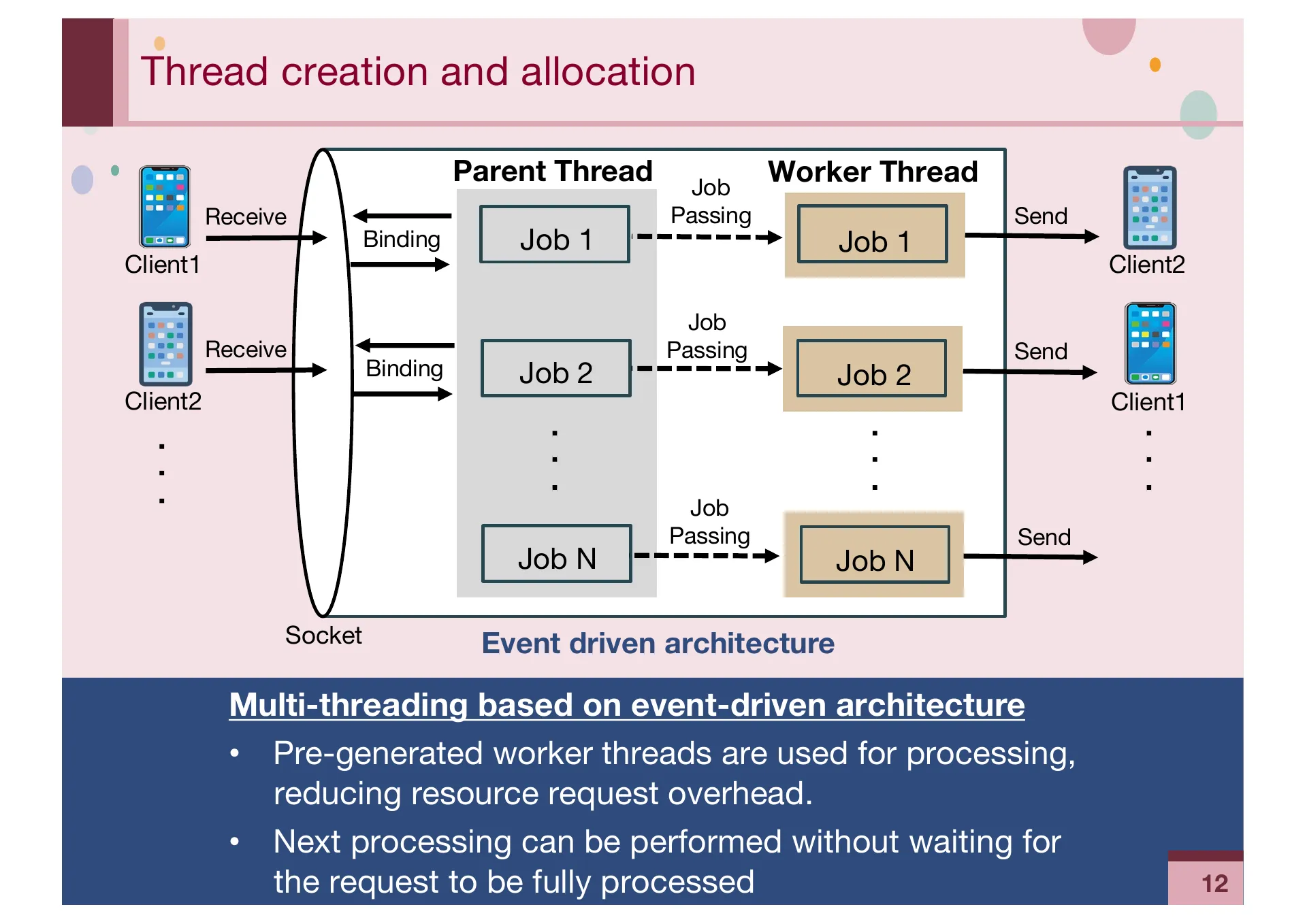

- P.12 — Thread creation and allocation design. Pre-generated worker threads receive jobs from parent threads to avoid creation overhead.

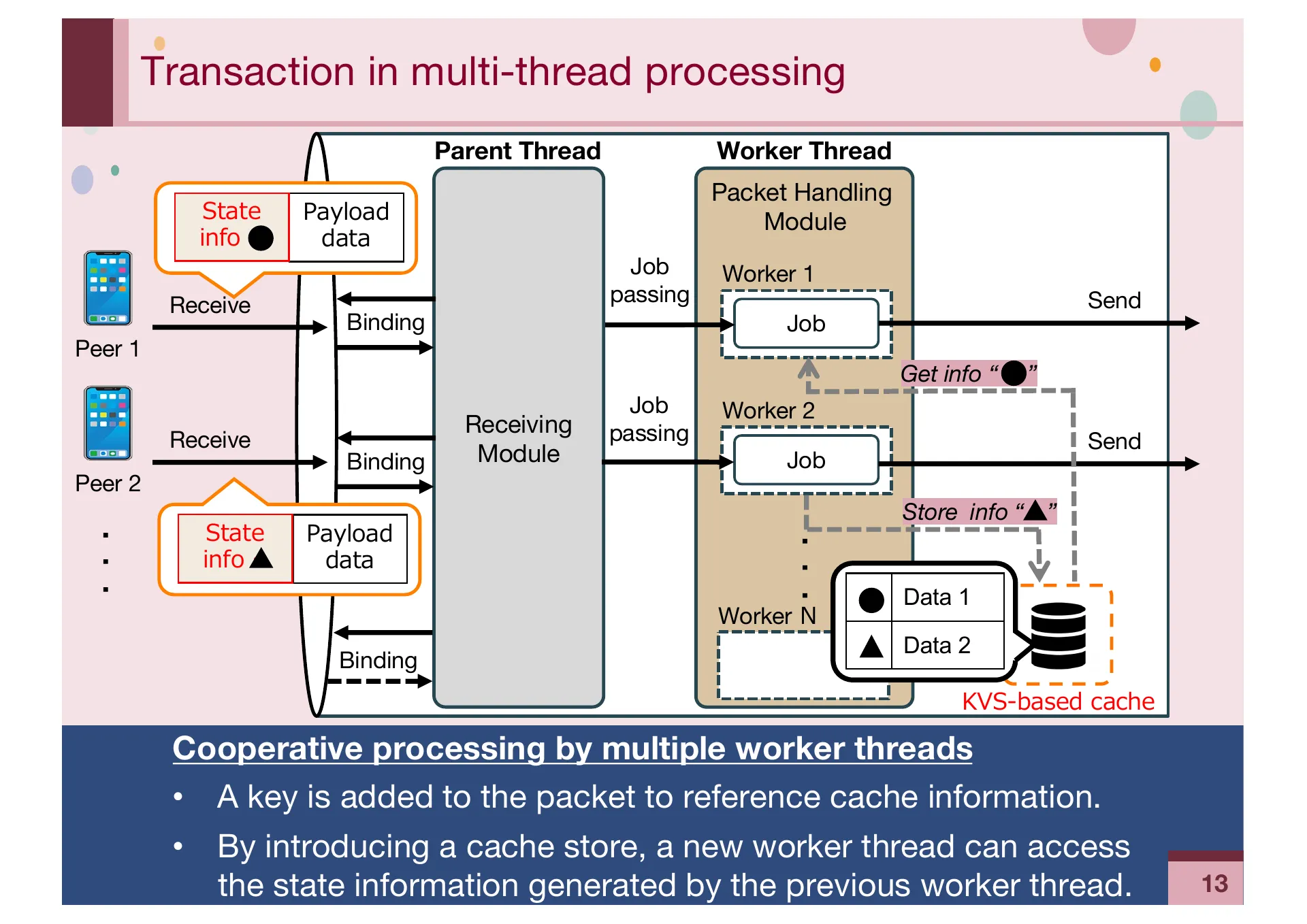
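The pre-generated worker design summarized for P.12 resembles a conventional worker pool. The following is a hedged sketch under that assumption: `Job`, `StartPool`, the pool size, and the channel capacity are illustrative names and values, not taken from the slides.

```go
package main

import (
	"fmt"
	"sync"
)

// Job is a hypothetical unit of work: one packet payload to process.
type Job struct {
	ID      int
	Payload []byte
}

// StartPool launches n workers once, up front. Each worker loops over the
// shared jobs channel, so dispatching a job never creates a new goroutine.
// The returned WaitGroup is done after jobs is closed and drained.
func StartPool(n int, jobs <-chan Job, handle func(Job)) *sync.WaitGroup {
	var wg sync.WaitGroup
	for i := 0; i < n; i++ {
		wg.Add(1)
		go func() {
			defer wg.Done()
			for j := range jobs {
				handle(j)
			}
		}()
	}
	return &wg
}

func main() {
	jobs := make(chan Job, 16)
	var mu sync.Mutex
	processed := 0

	wg := StartPool(4, jobs, func(j Job) {
		mu.Lock()
		processed++
		mu.Unlock()
	})

	// The parent only enqueues jobs; the workers already exist.
	for i := 0; i < 100; i++ {
		jobs <- Job{ID: i, Payload: []byte("packet")}
	}
	close(jobs)
	wg.Wait()
	fmt.Println("processed:", processed) // prints "processed: 100"
}
```

The creation cost is paid once at startup, and the per-packet cost reduces to a channel send.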

- P.13 — Transaction handling in multi-threaded processing. Cache-based state information storage and retrieval for consistency across asynchronous workers.

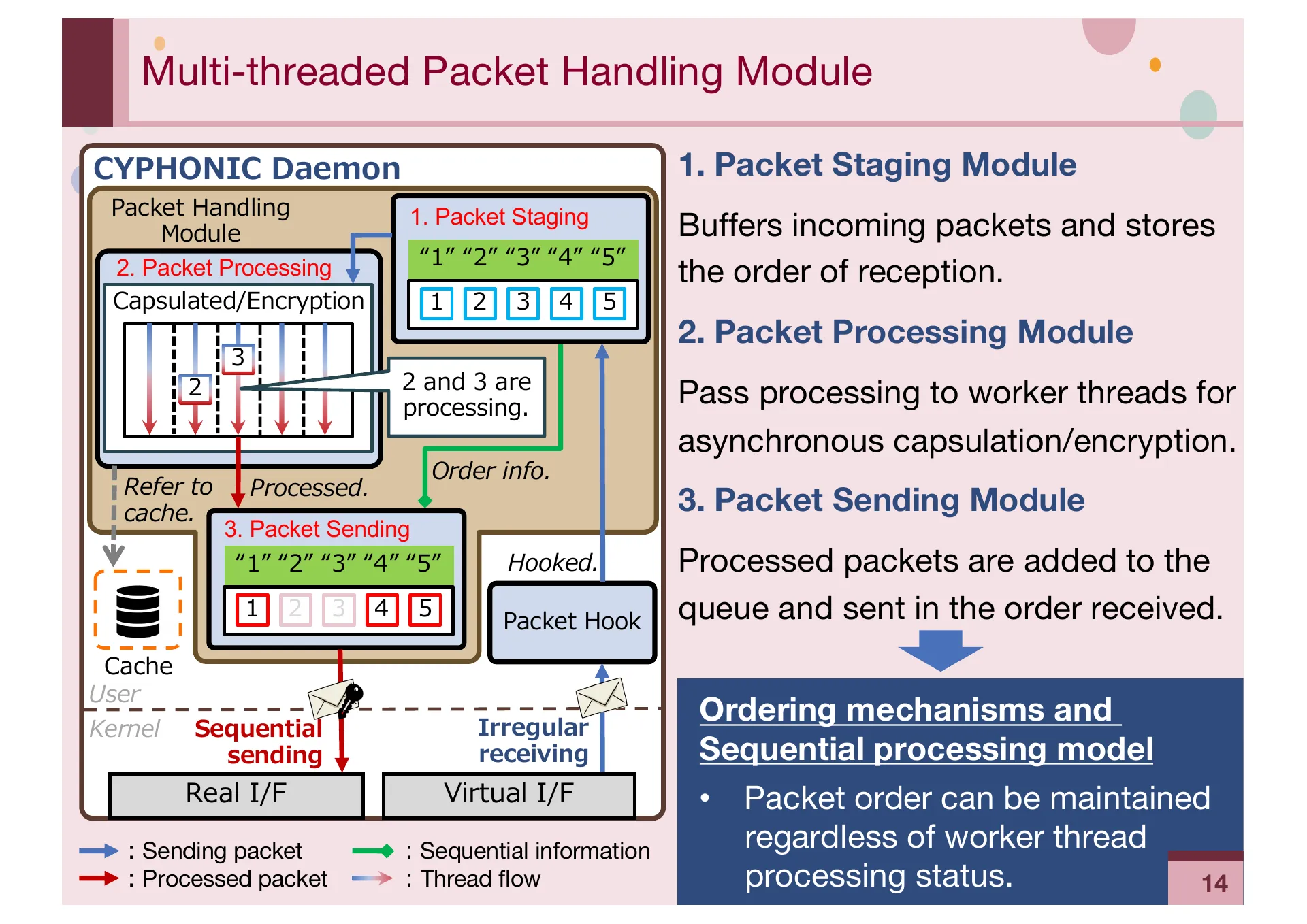
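One way to picture the cache-based transaction handling of P.13 is a map keyed by a transaction identifier: the goroutine that sends a request stores the context, and whichever worker receives the response retrieves it. The sketch below assumes a numeric transaction ID and uses hypothetical names (`TxState`, `TxCache`, `Begin`, `Finish`); the slides do not specify the key format or cache type.

```go
package main

import (
	"fmt"
	"sync"
)

// TxState is hypothetical per-transaction context.
type TxState struct {
	Peer  string
	Phase string
}

// TxCache maps a transaction ID (assumed uint32) to its TxState.
type TxCache struct {
	m sync.Map
}

// Begin records the state when a request is sent.
func (c *TxCache) Begin(id uint32, st TxState) { c.m.Store(id, st) }

// Finish atomically removes and returns the state, so at most one worker
// can complete a given transaction.
func (c *TxCache) Finish(id uint32) (TxState, bool) {
	v, ok := c.m.LoadAndDelete(id)
	if !ok {
		return TxState{}, false
	}
	return v.(TxState), true
}

func main() {
	var cache TxCache

	// Request side: register three outstanding transactions.
	for id := uint32(1); id <= 3; id++ {
		cache.Begin(id, TxState{Peer: fmt.Sprintf("node-%d", id), Phase: "requested"})
	}

	// Response side: responses arrive in a different order and are handled
	// by workers that never saw the original request.
	responses := make(chan uint32)
	var wg sync.WaitGroup
	for w := 0; w < 2; w++ {
		wg.Add(1)
		go func(worker int) {
			defer wg.Done()
			for id := range responses {
				if st, ok := cache.Finish(id); ok {
					fmt.Printf("worker %d finished tx %d for %s\n", worker, id, st.Peer)
				}
			}
		}(w)
	}
	for _, id := range []uint32{3, 1, 2} {
		responses <- id
	}
	close(responses)
	wg.Wait()
}
```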

- P.14 — Packet ordering mechanism. Packet Staging, Processing, and Sending modules maintain reception order during asynchronous encapsulation and encryption.

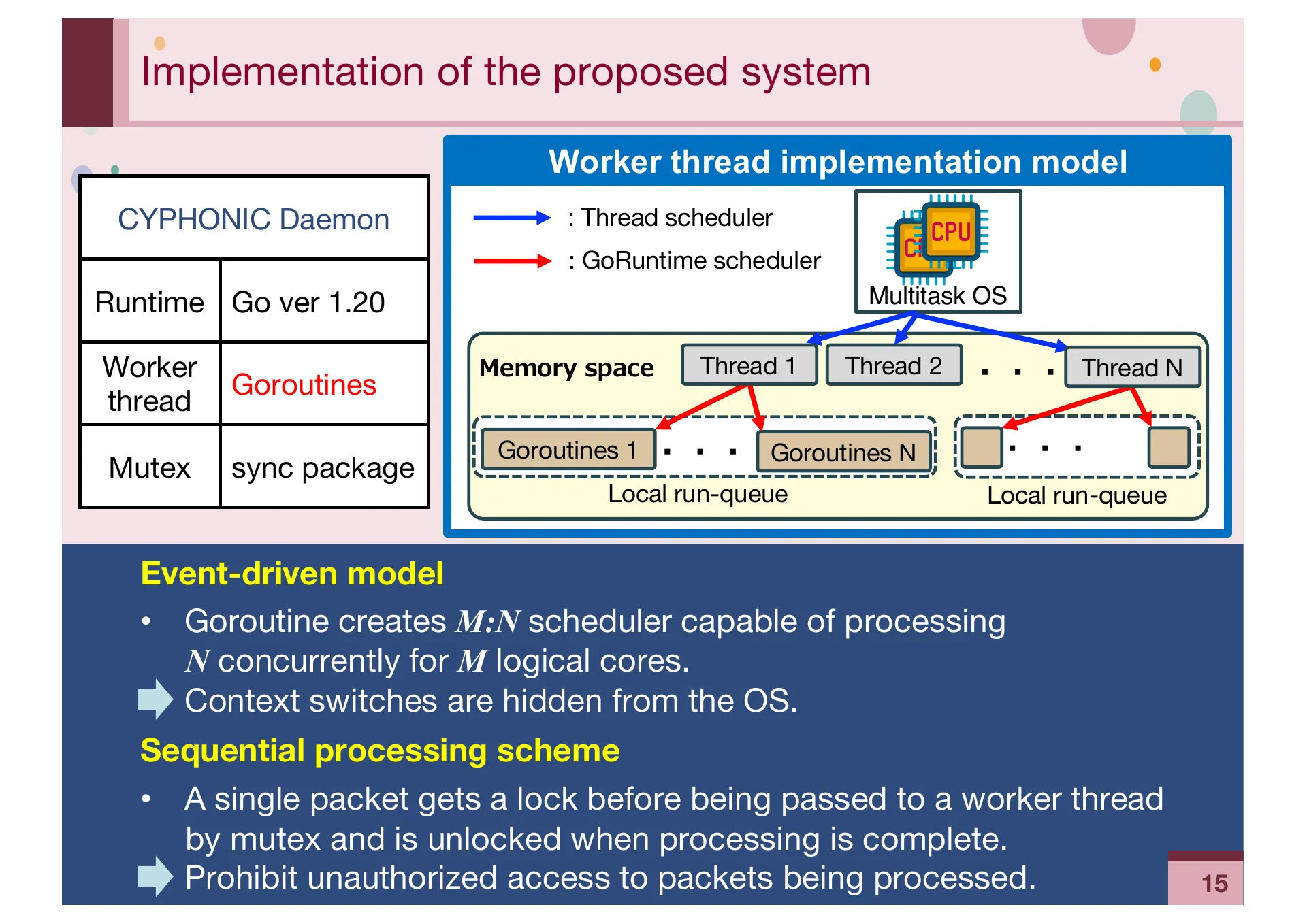
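The three-stage ordering idea of P.14 can be sketched as follows. The summary does not say how order is tracked, so this example assumes a sequence number assigned at staging time and a reorder buffer at the sending stage; `ProcessInOrder` and `staged` are hypothetical names, and `strings.ToUpper` merely stands in for encapsulation and encryption.

```go
package main

import (
	"fmt"
	"strings"
	"sync"
)

// staged is a packet tagged with its reception order.
type staged struct {
	seq  uint64
	data string
}

// ProcessInOrder runs process on every input concurrently, yet returns the
// results in the original input order. workers must be at least 1.
func ProcessInOrder(inputs []string, workers int, process func(string) string) []string {
	stagedCh := make(chan staged)
	doneCh := make(chan staged)

	// Staging: assign sequence numbers in reception order.
	go func() {
		for i, in := range inputs {
			stagedCh <- staged{seq: uint64(i), data: in}
		}
		close(stagedCh)
	}()

	// Processing: workers may finish in any order.
	var wg sync.WaitGroup
	for w := 0; w < workers; w++ {
		wg.Add(1)
		go func() {
			defer wg.Done()
			for p := range stagedCh {
				doneCh <- staged{seq: p.seq, data: process(p.data)}
			}
		}()
	}
	go func() {
		wg.Wait()
		close(doneCh)
	}()

	// Sending: hold early arrivals until all earlier packets are emitted.
	out := make([]string, 0, len(inputs))
	pending := make(map[uint64]string)
	var next uint64
	for p := range doneCh {
		pending[p.seq] = p.data
		for {
			data, ok := pending[next]
			if !ok {
				break
			}
			out = append(out, data)
			delete(pending, next)
			next++
		}
	}
	return out
}

func main() {
	in := []string{"a", "b", "c", "d", "e"}
	fmt.Println(ProcessInOrder(in, 3, strings.ToUpper)) // prints [A B C D E]
}
```

The reorder buffer trades a small amount of memory and latency for in-order delivery: a slow packet delays every later packet until it completes.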

- P.15 — Implementation details. Go 1.20 Goroutines with M:N scheduling model and event-driven architecture for efficient concurrent processing.

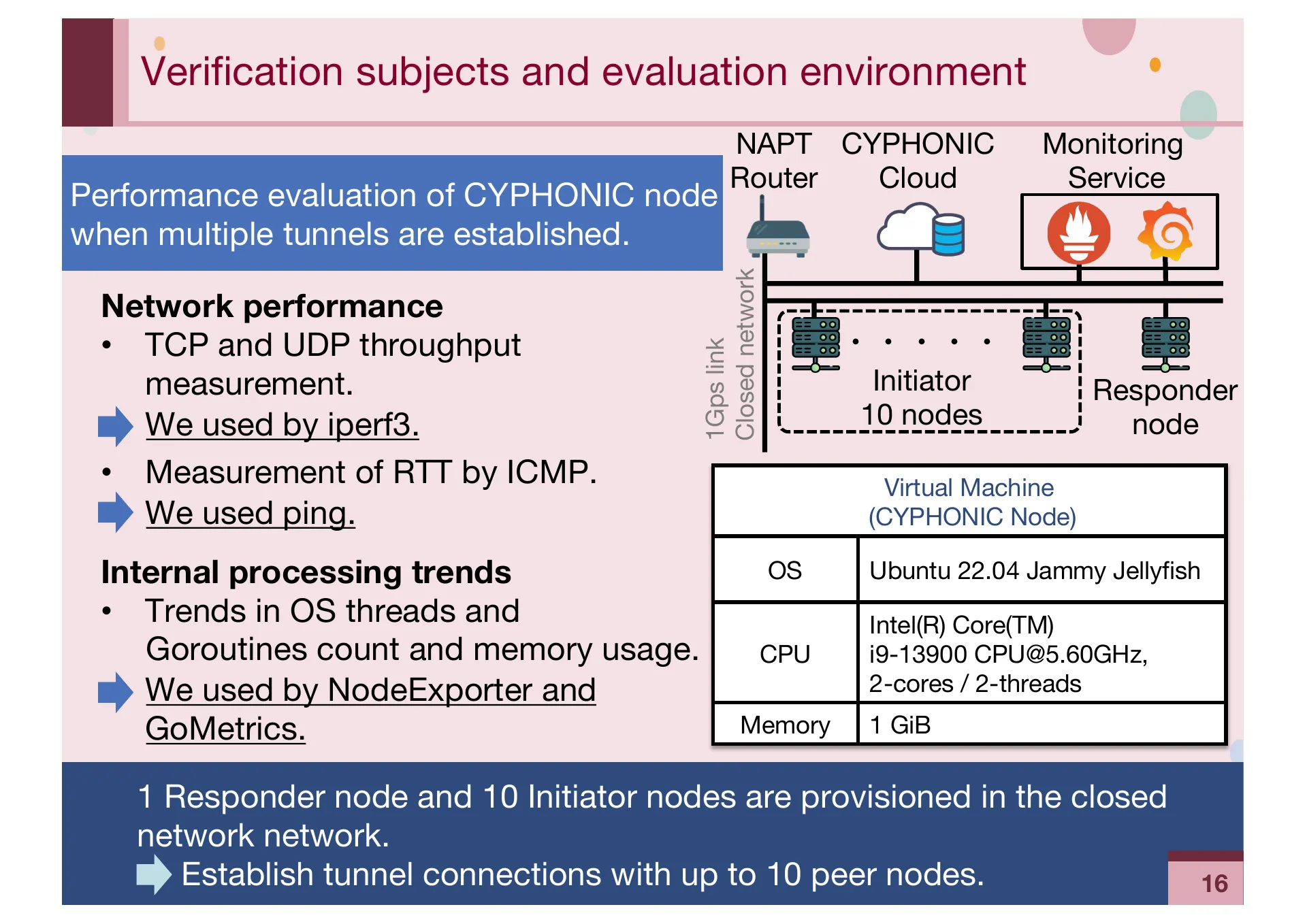

- P.16 — Verification environment. 10-node closed network setup measuring TCP/UDP throughput with iperf3 and RTT with ping.

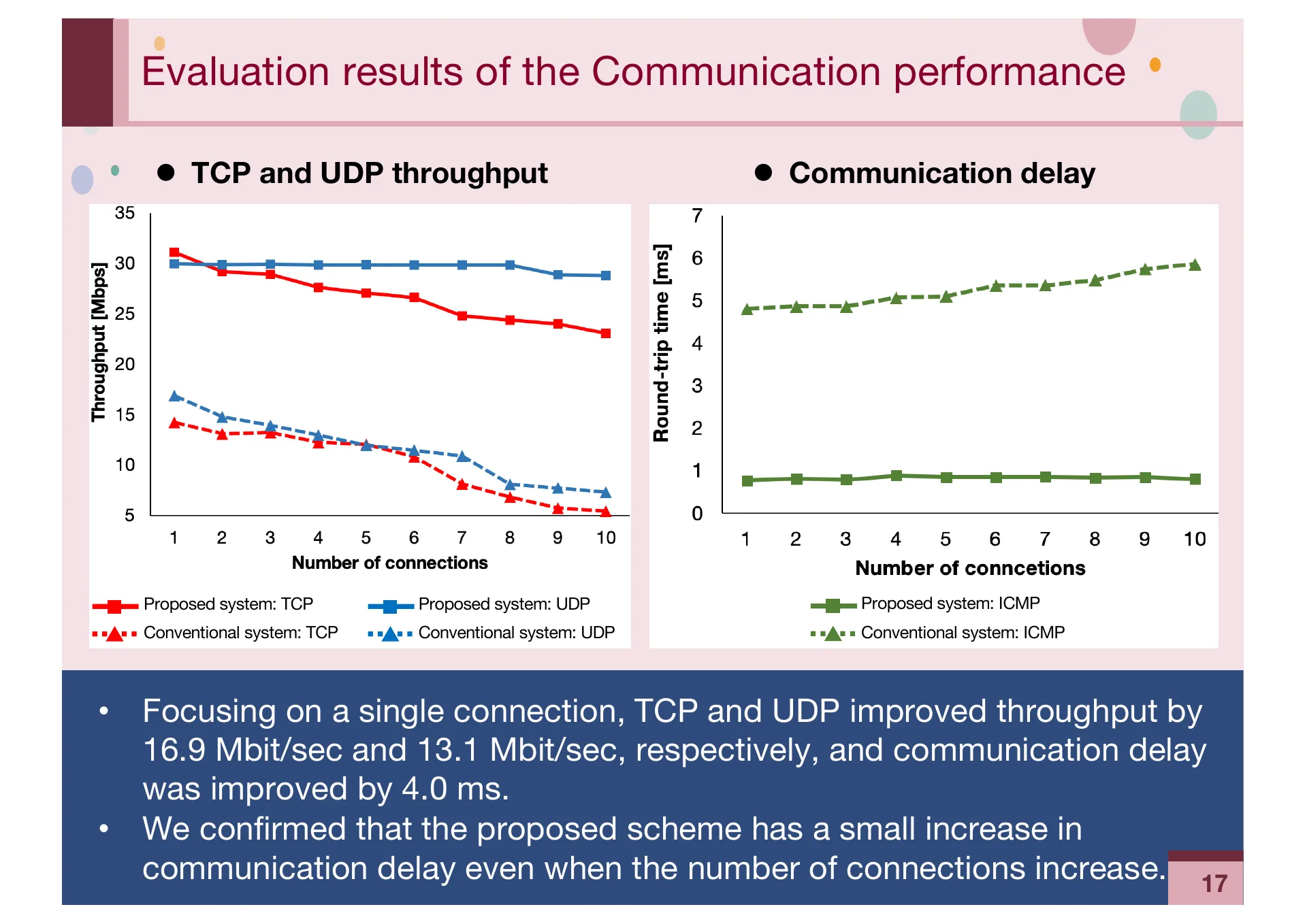

- P.17 — Communication performance results. TCP and UDP throughput improved by 16.9 Mbit/s and 13.1 Mbit/s, respectively, and delay was reduced by 4.0 ms.

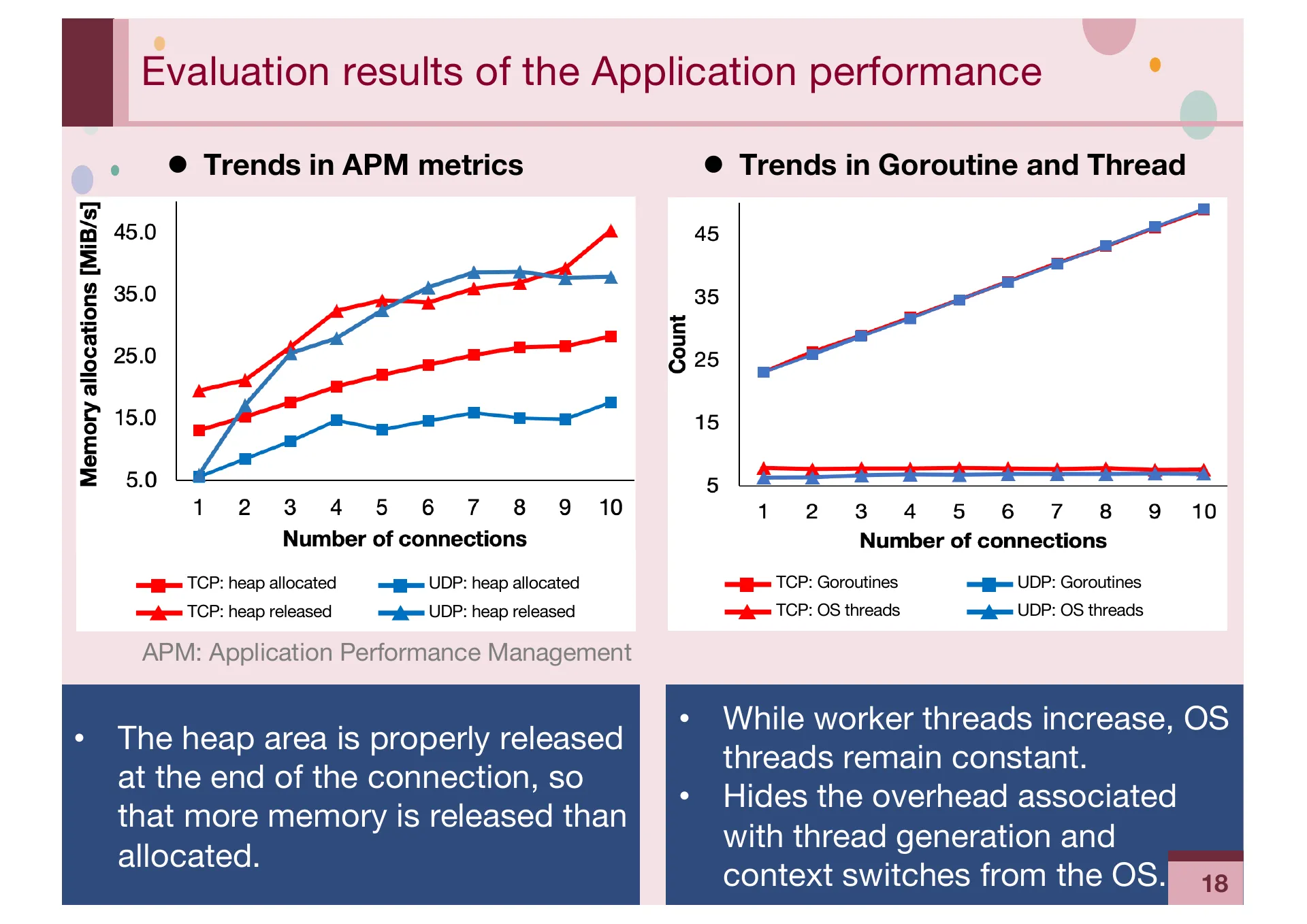

- P.18 — Application performance results. Proper heap memory release confirmed. OS threads remain constant while Goroutines scale with connections.
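The counters mentioned for P.18 can be read from the Go runtime itself. The snippet below is a rough sketch of one way to observe them, not the measurement method used in the presentation: `Snapshot` and `Take` are hypothetical names, and parked goroutines stand in for connections.

```go
package main

import (
	"fmt"
	"runtime"
	"runtime/pprof"
	"sync"
)

// Snapshot captures the runtime counters of interest.
type Snapshot struct {
	Goroutines int    // goroutines that currently exist
	OSThreads  int    // entries in the runtime's "threadcreate" profile
	HeapAlloc  uint64 // bytes of allocated heap objects not yet freed
}

// Take reads the counters from the runtime and pprof packages.
func Take() Snapshot {
	var m runtime.MemStats
	runtime.ReadMemStats(&m)
	return Snapshot{
		Goroutines: runtime.NumGoroutine(),
		OSThreads:  pprof.Lookup("threadcreate").Count(),
		HeapAlloc:  m.HeapAlloc,
	}
}

func main() {
	fmt.Printf("before: %+v\n", Take())

	// Simulate connections: one goroutine per connection, parked on a channel.
	stop := make(chan struct{})
	var wg sync.WaitGroup
	for i := 0; i < 1000; i++ {
		wg.Add(1)
		go func() {
			defer wg.Done()
			<-stop
		}()
	}
	fmt.Printf("during: %+v\n", Take())

	// Release the goroutines and force a collection before sampling again.
	close(stop)
	wg.Wait()
	runtime.GC()
	fmt.Printf("after:  %+v\n", Take())
}
```

Exact values depend on the machine and Go version. The general expectation is that the goroutine count follows the number of simulated connections while the thread count changes little, since the Go scheduler multiplexes goroutines onto a small set of OS threads.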

- P.19 — Conclusions. Proposed multi-thread scheme significantly improves throughput and maintains constant communication delay as connections increase.